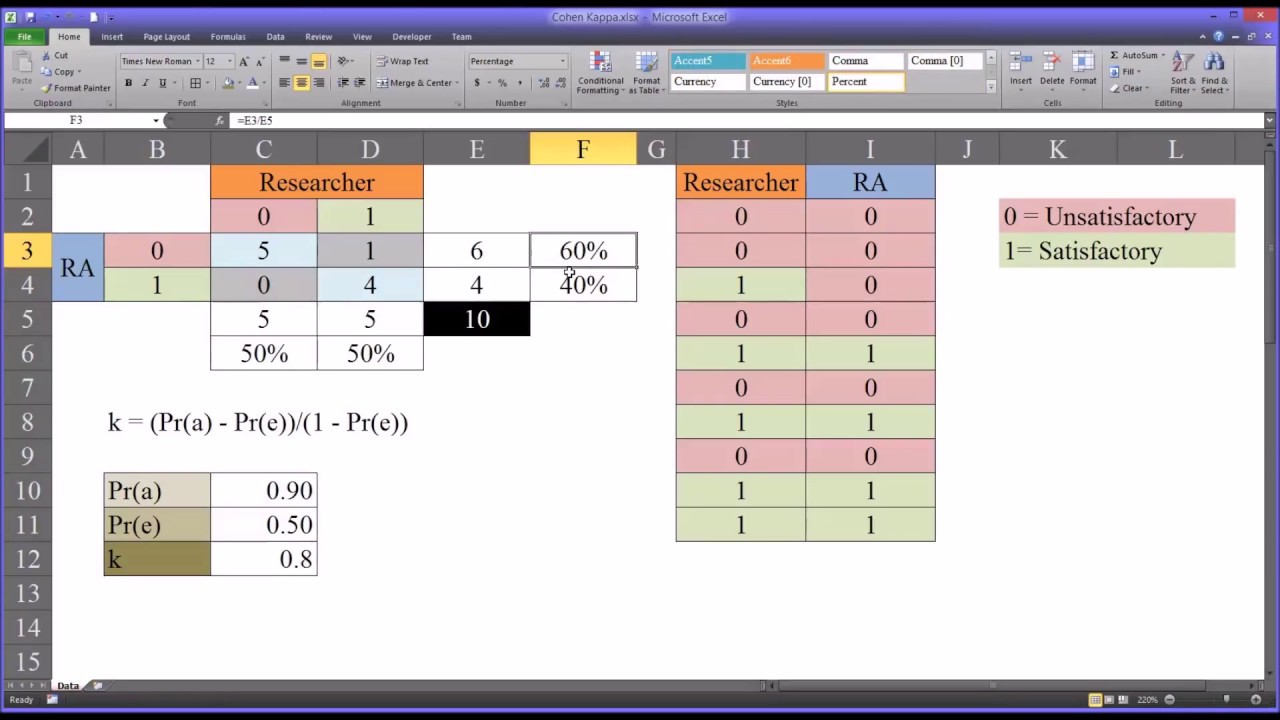

Kappa Statistic is not Satisfactory for Assessing the Extent of Agreement Between Raters | Semantic Scholar

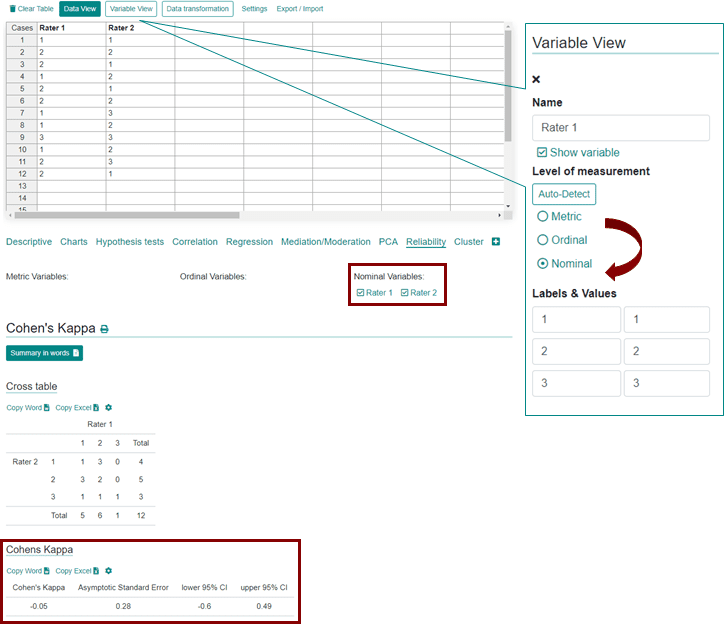

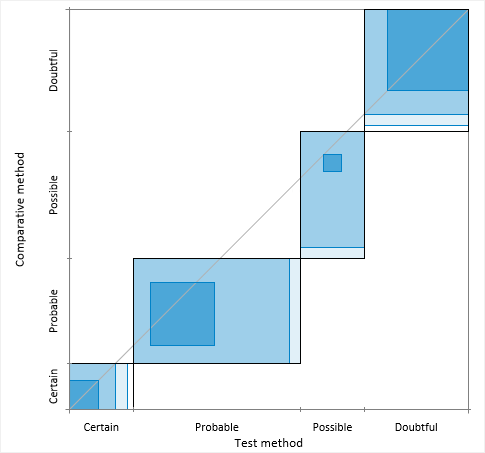

Agreement plot (Method comparison / Agreement, Statistical Reference Guide) | Analyse-it® 6.10 documentation

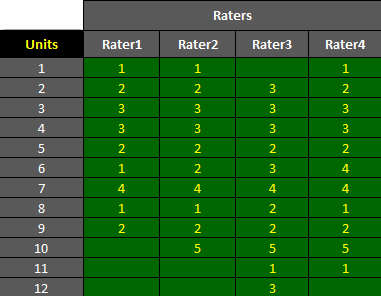

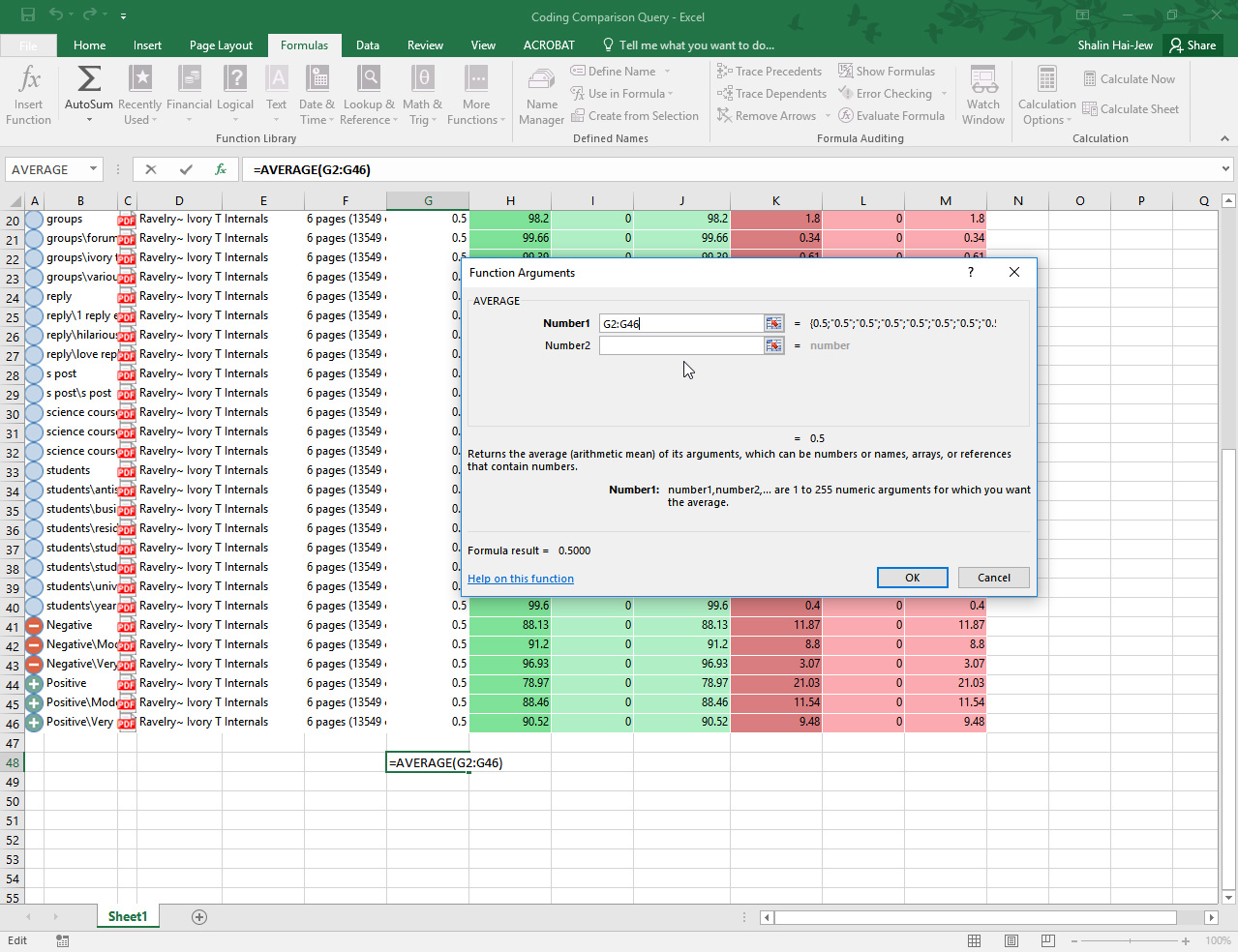

GitHub - Christian-TechUCM/Fleiss-Kappa: Python script that calculates Fleiss Kappa, a statistical measure of inter-rater agreement, on data from an Excel file.